Artificial intelligence is no longer experimental

Artificial intelligence is no longer experimental. It is used across many fields, including corporate operations, HR, healthcare diagnostics, education platforms, and public services. Decisions once made by humans are now increasingly shaped or fully determined by algorithms. This shift brings efficiency and scale. But it also introduces legal risk, ethical blind spots, and accountability gaps that many organisations underestimate.

Responsible AI is not about slowing innovation. It is about ensuring that AI systems remain lawful, fair, explainable, and aligned with human values, especially when their decisions affect people’s lives.

This article explores the legal, ethical, and often overlooked “dark side” of AI adoption, and why organisations must take governance seriously before harm occurs.

What Is Responsible AI?

Responsible AI refers to the design, deployment, and governance of AI systems in a way that prioritises human rights, transparency, fairness, and accountability.

At its core, Responsible AI asks difficult but necessary questions:

- Who is accountable when an AI system makes a harmful decision?

- Can the decision be explained to the person affected?

- Was bias introduced through data, design, or deployment?

- Does the system comply with existing laws and emerging regulations?

Without clear answers, AI becomes a liability, not an advantage.

AI Does Not Remove Responsibility

One of the most dangerous assumptions organisations make is that AI somehow “absorbs” responsibility. In practice, AI shifts responsibility upward, not away.

When an AI system makes a decision that affects a person, whether it is a hiring rejection, a denied loan, a flagged transaction, or a performance evaluation, the organisation deploying that system remains legally accountable for the outcome. Courts and regulators do not recognise algorithms as independent decision-makers. They recognise organisations, boards, and executives.

This creates several legal realities that leaders must confront:

First, existing laws already apply. Most AI risks fall under current legal frameworks such as employment law, consumer protection, data protection, professional negligence, and administrative law. Waiting for “AI-specific laws” before acting is a strategic mistake.

Second, lack of intent does not eliminate liability. An organisation does not need to intend discrimination or harm for liability to arise. If an AI system produces unfair or unlawful outcomes, the impact matters more than the intention.

Third, outsourcing does not outsource risk. Using third-party AI tools, SaaS platforms, or external vendors does not shield an organisation from responsibility. If your organisation benefits from the system, it also owns the consequences.

Finally, documentation matters. When something goes wrong, regulators will ask:

- Why was this system chosen?

- What risks were identified before deployment?

- What safeguards were put in place?

- Who approved its use?

Organisations that cannot answer these questions clearly are already exposed.
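For teams that want to make these questions concrete, one option is to keep a simple record for every AI system and check it for gaps. The sketch below is only an illustration: the `DeploymentRecord` class, its field names, and the example system are hypothetical, and a real register would follow whatever format your legal and compliance teams require.

```python
from dataclasses import dataclass, field


@dataclass
class DeploymentRecord:
    """Hypothetical record holding answers to the four questions above."""
    system_name: str
    reason_chosen: str = ""
    risks_identified: list[str] = field(default_factory=list)
    safeguards: list[str] = field(default_factory=list)
    approved_by: str = ""

    def unanswered(self) -> list[str]:
        """Return the questions this record cannot yet answer."""
        gaps = []
        if not self.reason_chosen.strip():
            gaps.append("Why was this system chosen?")
        if not self.risks_identified:
            gaps.append("What risks were identified before deployment?")
        if not self.safeguards:
            gaps.append("What safeguards were put in place?")
        if not self.approved_by.strip():
            gaps.append("Who approved its use?")
        return gaps


# Illustrative entry: two of the four questions are still open.
record = DeploymentRecord(
    system_name="cv-screening-tool",
    reason_chosen="Reduce time spent on first-pass CV review",
    risks_identified=["Possible bias against career gaps"],
)
print(record.unanswered())
# → ['What safeguards were put in place?', 'Who approved its use?']
```

A record with an empty result is not proof of good governance, but a record with open questions is a clear signal that the system should not yet be in use.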

The Ethics Problem

Ethical failures in AI rarely begin with malicious intent. They usually start with convenience, speed, or cost-saving decisions that go unquestioned. The main ethical challenge of AI is that capability often outpaces judgment. Just because a system can analyse behaviour, predict outcomes, or optimise decisions does not mean it should be allowed to do so without limits. Several ethical tensions repeatedly emerge:

One is a power imbalance. AI systems often operate invisibly, while the people affected by them have little insight or ability to challenge decisions. This imbalance undermines fairness and trust.

Another is context loss. Algorithms are trained on patterns, not lived experiences. They struggle with nuance, exceptional cases, and human complexity, yet their outputs are often treated as objective truth.

There is also ethical drift over time. A system that appears acceptable at launch may become harmful as data changes, business goals evolve, or usage expands beyond its original scope.

Perhaps most importantly, ethics failures often arise when human judgment is quietly removed from the loop. When people stop questioning AI outputs, ethical oversight collapses.

Ethical AI is not about avoiding technology. It is about ensuring that human values remain in control of automated systems, especially when the stakes are high.

Moving Forward: From Awareness to Action

Awareness of AI risk is no longer enough. Most organisations now recognise that AI introduces legal and ethical challenges. The real test is whether they act on that understanding.

Moving forward requires shifting from reactive thinking to deliberate governance.

This means:

- Mapping where AI is used across the organisation

- Assessing which systems affect people, rights, or opportunities

- Introducing human oversight where impact is high

- Training leaders and teams to question AI outputs, not just consume them

- Reviewing systems regularly, not only at launch
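The first, second, third, and fifth steps above can be sketched as a small inventory check. This is a minimal illustration under stated assumptions: the `AISystem` fields, the 180-day review interval, and the example systems are all hypothetical, not a legal or regulatory standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class AISystem:
    """Hypothetical inventory entry for one AI system."""
    name: str
    owner: str
    affects_people: bool   # influences people, rights, or opportunities
    human_review: bool     # a person can review and override its outputs
    last_reviewed: date


# Assumed review cycle for illustration only.
REVIEW_INTERVAL = timedelta(days=180)


def governance_gaps(system: AISystem, today: date) -> list[str]:
    """List the governance gaps for one system as of the given date."""
    gaps = []
    if system.affects_people and not system.human_review:
        gaps.append("high impact without human oversight")
    if today - system.last_reviewed > REVIEW_INTERVAL:
        gaps.append("review overdue")
    return gaps


inventory = [
    AISystem("cv-screening", "HR", True, False, date(2025, 1, 10)),
    AISystem("meeting-summariser", "IT", False, False, date(2025, 11, 1)),
]

today = date(2026, 1, 15)
for system in inventory:
    print(system.name, governance_gaps(system, today))
# → cv-screening ['high impact without human oversight', 'review overdue']
# → meeting-summariser []
```

The value of a check like this lies less in the code than in the inventory behind it: a system that is not listed cannot be assessed.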

Responsible AI is not a one-time project. It is an ongoing discipline. Organisations that invest early in governance, accountability, and ethical decision-making will not only reduce risk but also build trust with employees, customers, and the public. Those that ignore these responsibilities may find that the cost of fixing harm later far exceeds the cost of doing things right from the start.

Conclusion

AI is no longer a future consideration. It is already shaping decisions that affect people’s careers, access to services, financial outcomes, and personal rights. As AI becomes more deeply embedded in organisational systems, the risks associated with poor governance grow just as quickly as the benefits of automation.

Responsible AI is not about fear or resistance to technology. It is about recognising that power without accountability creates harm. Legal responsibility cannot be delegated to algorithms. Ethical responsibility cannot be automated away. And trust cannot be rebuilt after damage has already occurred.

Organisations that treat AI as a purely technical tool will continue to face legal exposure, ethical failures, and public backlash. Those who treat it as a governance and leadership challenge will be better equipped to use AI safely, fairly, and sustainably.

The question is no longer whether AI should be used, but how it is governed, who is accountable, and what safeguards are in place when things go wrong. The organisations that address these questions now will lead with confidence. Those who delay will be forced to respond under pressure.

Bonus

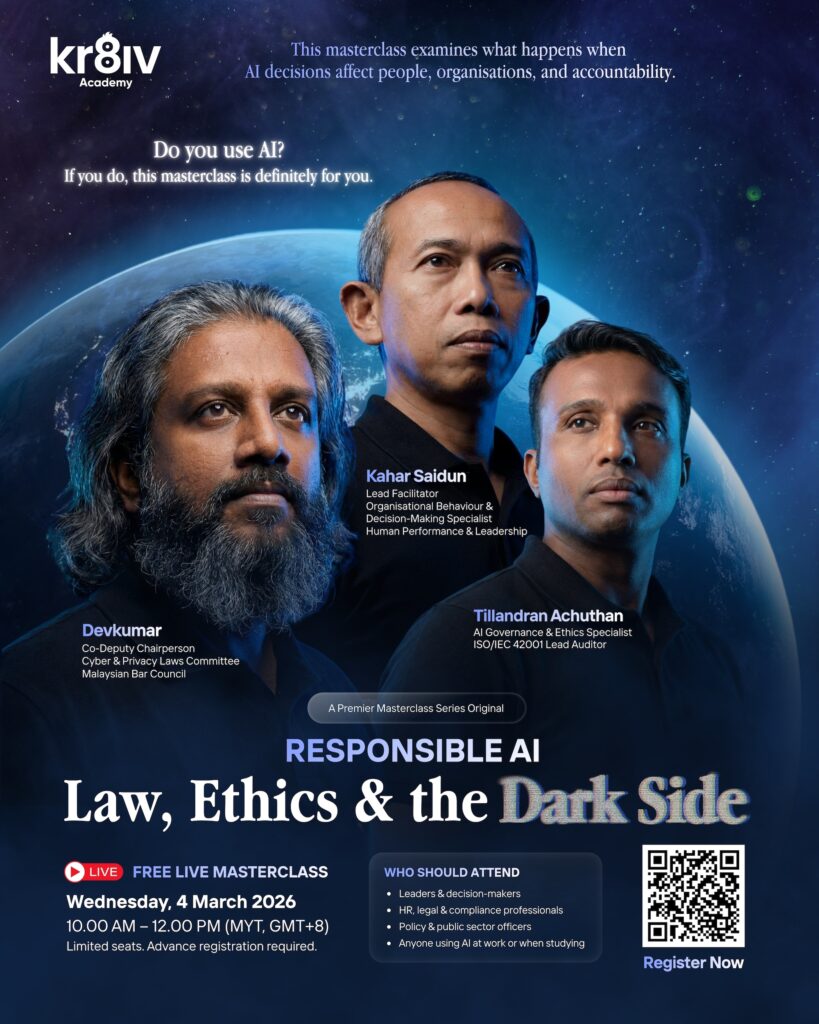

As organisations continue to adopt AI at scale, understanding responsibility, ethics, and governance is becoming a leadership necessity rather than a technical choice. To explore these issues in more depth, Kr8iv Academy is hosting a free live masterclass on Responsible AI, focused on the legal realities, ethical risks, and accountability challenges discussed in this article.

The session is designed for leaders, HR, legal, compliance, and professionals working with AI-enabled systems who want practical clarity on responsible adoption. The masterclass will take place on Wednesday, 4 March 2026, from 10:00 AM to 12:00 PM (MYT), with limited seats available. Join it here